Top 8 AI Tools for Personal Knowledge Management in 2026: Ranked by Actual Usefulness

From AI-powered note-taking to local LLMs that never touch the cloud, here are the 8 best AI tools for managing your knowledge in 2026 — ranked honestly.


Let's be direct: most "best AI tools" lists are just affiliate dumps dressed up with fake enthusiasm. This isn't one of those.

Personal knowledge management — the art of capturing, organizing, and actually using what you know — has been quietly transformed by AI over the past two years. But not every tool deserves your time, your data, or your subscription fee. Some are genuinely excellent. Some are overhyped. A couple are criminally underrated.

I've spent significant time with each tool on this list. The ranking reflects a single question: does this tool make me meaningfully smarter or more organized, in daily practice? Not in demos. Not in YouTube tutorials. In real work.

Here's what made the cut in 2026 — and why.

Quick Comparison Table

| Rank | Tool | Best For | Pricing | Privacy-Friendly? |
|---|---|---|---|---|
| 1 | Mem.ai | Automatic knowledge organization | Paid (from ~$14.99/mo) | Cloud-based |
| 2 | Obsidian | Power users, local-first notes | Free; paid Sync/Publish add-ons | ✅ Fully local |
| 3 | Rewind AI | Recalling anything you've seen | Paid (~$19/mo) | ✅ On-device storage |
| 4 | LM Studio | Running local LLMs privately | Free | ✅ Fully local |
| 5 | GPT4All | Offline AI chat with your docs | Free | ✅ Fully local |
| 6 | DeepSeek | Powerful reasoning, low cost | Free / API pricing | Partial (open weights can run locally) |
| 7 | Mistral | Efficient European AI, API focus | Free / API pricing | ✅ Self-hostable |
| 8 | Grok | Real-time knowledge + X integration | Paid (X Premium+) | Cloud-based |

#1 — Mem.ai: The Best AI-Native Note-Taker

What it does: Mem.ai is a note-taking app built from the ground up around AI. There are no folders, no tags required, no manual organization. You write, and Mem's AI figures out how everything connects. It surfaces related notes automatically, lets you chat with your entire knowledge base, and drafts documents from your existing notes.

Best for: Professionals who write a lot and hate organizing things manually — consultants, researchers, writers, product managers.

Pricing: Free tier is limited (mostly a demo). The real product starts around $14.99/month for the AI plan, with team plans running higher. No lifetime option.

Why it's #1: The core premise — that you shouldn't have to organize your notes, the AI should — actually works in 2026. The semantic search is genuinely impressive. Ask "what did I write about pricing strategy last quarter?" and it finds it, even if you never tagged it. Competitors like Notion AI bolt AI onto an existing structure. Mem built the structure around AI. That's a meaningful difference.

The chat-with-your-notes feature is one of the best implementations I've seen. It cites specific notes, rarely invents connections that aren't actually in your notes, and genuinely helps you synthesize your thinking rather than just retrieve text.

Pros:

- Zero-friction capture — just write

- Excellent semantic search across your entire history

- AI drafts that use your actual notes as source material

- Constantly improving; the 2026 version is significantly better than 2024

Cons:

- All your data lives on Mem's servers — a dealbreaker for some

- Can feel slow when your knowledge base gets large (10,000+ notes)

- No offline mode

- Pricing has crept up; the free tier is more teaser than tool

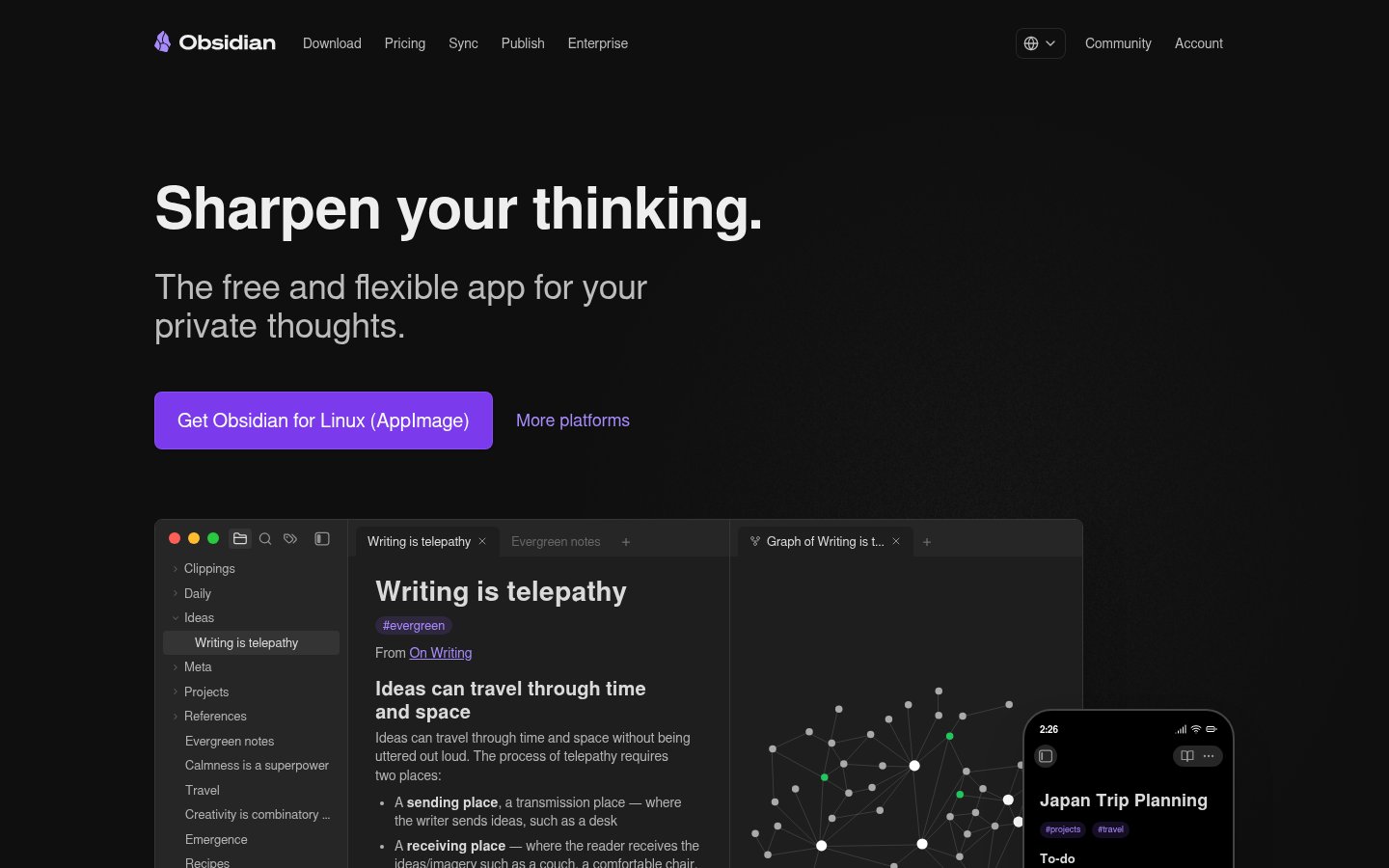

#2 — Obsidian: The Power User's Knowledge OS

What it does: Obsidian is a local-first Markdown editor that stores all your notes as plain text files on your machine. Its plugin ecosystem, with over 1,000 community plugins, transforms it into anything from a daily journal to a full research database. AI capabilities come via community plugins like Text Generator, Smart Connections, and Copilot.

Best for: Anyone who wants full ownership of their data, power users, developers, researchers, and privacy-conscious knowledge workers.

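Pricing: Free, including for work use; Obsidian dropped its commercial-license requirement in early 2025, so the $50/user/year commercial license is now optional. Sync (their own cloud sync) and Publish (web publishing) are separate paid add-ons; verify current rates on their site, as both have changed over time. Plugins are community-built and mostly free.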

Why it's #2: Obsidian isn't an AI tool by default; it's a platform on which AI tools can run. That distinction matters. Pair the right plugins with a model running on your own machine (for example, through LM Studio) and you get an AI assistant that reads your vault and never sends your data to anyone, alongside a graph view that connects your ideas. For security-conscious users, this is unbeatable.

The graph view — a visual map of how your notes connect — is still one of the most satisfying interfaces in knowledge work. It's not just pretty; it genuinely reveals relationships you didn't know existed.
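Because a vault is just a folder of Markdown files, the link structure the graph view draws is easy to inspect with your own scripts. The sketch below is a minimal illustration, not how Obsidian itself builds its graph: it assumes links are written as `[[Note]]`, `[[Note|alias]]`, or `[[Note#Heading]]`, treats each file name as the note name, counts `![[...]]` embeds as links, and ignores Markdown-style links and duplicate note names in different folders. `build_link_graph` and `backlinks` are hypothetical helper names.

```python
import re
from collections import defaultdict
from pathlib import Path

# Matches [[Target]], [[Target|alias]] and [[Target#Heading]]; captures "Target".
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def build_link_graph(vault_dir: str) -> dict[str, set[str]]:
    """Map each note name (file stem) to the set of note names it links to."""
    graph: dict[str, set[str]] = defaultdict(set)
    for path in Path(vault_dir).rglob("*.md"):
        text = path.read_text(encoding="utf-8")
        links = graph[path.stem]  # creates an entry even for notes with no links
        for match in WIKILINK.finditer(text):
            links.add(match.group(1).strip())
    return dict(graph)


def backlinks(graph: dict[str, set[str]], note: str) -> set[str]:
    """Return the notes that link *to* the given note."""
    return {src for src, targets in graph.items() if note in targets}
```

Pointing `build_link_graph` at a copy of your vault folder and calling `backlinks(graph, "Some Note")` gives a plain-text version of the connections the graph view visualizes.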

That said, the setup barrier is real. Getting a good Obsidian AI workflow running takes hours, not minutes. If you want something that works out of the box, Mem.ai beats it handily.

Pros:

- Full local storage — your data, your machine, forever

- Extraordinarily extensible via plugins

- Works offline, always

- Plain text files = future-proof and portable

- Free for personal use

Cons:

- High setup cost; the learning curve is steep

- AI features require third-party plugins and usually API keys

- Mobile experience is functional but not polished

- No built-in AI — you're assembling your own setup

#3 — Rewind AI: The AI That Remembers Everything You Did

What it does: Rewind AI records everything you see, say, and hear on your Mac — every meeting, every browser tab, every document — and makes it all searchable. Ask "what was that pricing document I opened last Tuesday?" and it finds it. The AI can also summarize meetings you attended, recall decisions made in Slack conversations, and draft follow-up emails based on what was actually discussed.

Best for: Knowledge workers who spend most of their day in meetings, consultants managing multiple client relationships, anyone whose work involves synthesizing lots of information from many sources.

Pricing: Around $19/month (pricing has fluctuated; verify current pricing on their site). Mac-only. A Windows version has been in development for a while.

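Why it's #3: The concept sounds dystopian, but the execution is surprisingly thoughtful. Recordings, transcripts, and the search index stay on your Mac rather than on Rewind's servers. For a tool that records literally everything you do, that's a critical design decision, and they've held to it. The exception to keep in mind: AI features such as chat and meeting summaries send relevant text to a cloud language model.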

The "meeting summarizer" alone is worth the price for heavy meeting loads. Instead of scrambling for notes at the end of a 90-minute strategy session, you have a searchable, summarized record. The recall feature has genuinely saved me hours tracking down specific pieces of information.

The limitation is platform lock-in (Mac only, for now) and the not-insignificant storage and processing overhead. Rewind can eat through disk space fast if you're not careful.

Pros:

- Genuinely novel — there's nothing quite like it

- Recordings and the search index stay on-device; strong privacy stance

- Meeting summaries are accurate and useful

- Search is fast and intuitive

Cons:

- Mac only (as of mid-2026)

- High local storage usage

- Recording everything feels excessive when you only want specific things

- Some video conferencing platforms flag it as a third-party recorder

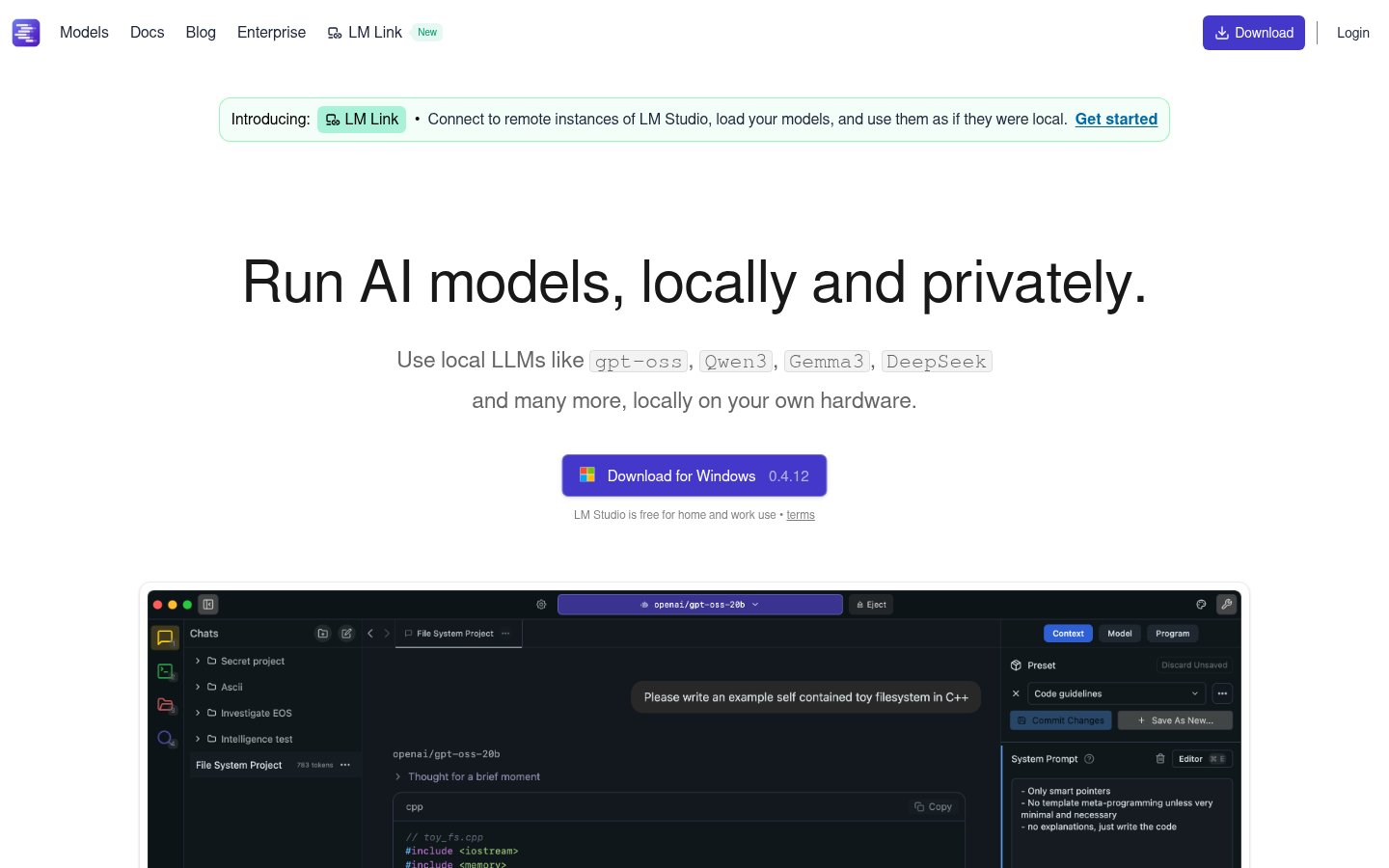

#4 — LM Studio: Run Any AI Model, Locally, Free

What it does: LM Studio is a desktop application that lets you download and run open-source large language models — Llama 3, Mistral, DeepSeek, Phi-3, and dozens more — directly on your computer. No API keys, no subscription, no data leaving your machine. It includes a chat interface, a local API server (OpenAI-compatible), and a model discovery browser.

Best for: Developers, privacy-focused professionals, and anyone who wants a capable AI assistant without sending their data to OpenAI, Anthropic, or Google.

Pricing: Free. Completely free. The business model is presumably enterprise tooling, but the core product costs nothing.

Why it's #4: LM Studio has quietly become the standard for running local AI in 2026. The interface is polished enough that non-developers can use it, but powerful enough that developers use it as a local API backend for their own tools. The ability to switch between models — try Llama 3 70B, then compare to a smaller Phi-3 Mini — in the same UI is genuinely useful for understanding model capabilities.
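Because the local server speaks the OpenAI chat-completions format, a few lines of standard-library Python are enough to query it from your own tools. This is a sketch under assumptions: the server has been started in LM Studio on its default port (1234), and `local-model` is a placeholder for the identifier of whichever model you have loaded. `build_chat_request` only constructs the request; `ask` needs the server to be running.

```python
import json
import urllib.request

# LM Studio's local server listens on port 1234 by default; adjust if you changed it.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(question: str, model: str = "local-model") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for a local server."""
    payload = {
        "model": model,  # placeholder: use the identifier of the model you loaded
        "messages": [
            {"role": "system", "content": "Answer using the user's notes when possible."},
            {"role": "user", "content": question},
        ],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question: str) -> str:
    """Send the request to the running server and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(question), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Keeping request construction separate from sending makes it easy to swap `BASE_URL` or the model name without touching the rest of your tooling.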

For knowledge management specifically, the "Chat with Documents" feature (based on retrieval-augmented generation, or RAG) lets you load your own PDFs, notes, and files and query them with any model you choose. It's not as seamless as Mem.ai's implementation, but it's free and private.
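Retrieval-augmented generation boils down to three steps: split documents into chunks, find the chunks most similar to the question, and paste them into the prompt. The sketch below shows that pipeline in plain Python. It is not LM Studio's implementation: real tools score similarity with a neural embedding model, while this stand-in uses bag-of-words cosine similarity so it runs without any model. The chunk size and `k` are arbitrary illustrative defaults.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 50) -> list[str]:
    """Split text into chunks of roughly `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def vectorize(text: str) -> Counter:
    """Stand-in for an embedding model: a lowercase bag-of-words count."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = vectorize(query)
    return sorted(chunks, key=lambda c: cosine(q, vectorize(c)), reverse=True)[:k]


def build_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Chunk the documents, retrieve the best matches, and pack them into a grounded prompt."""
    chunks = [c for d in docs for c in chunk(d)]
    context = "\n---\n".join(retrieve(query, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

The string returned by `build_prompt` is what you would send as the user message to a local model, for example through the OpenAI-compatible server LM Studio exposes.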

Pros:

- Completely free

- Complete data privacy — nothing leaves your machine

- Supports hundreds of models; easy to switch

- OpenAI-compatible local API, great for developers

- "Chat with Documents" built in

Cons:

- Requires a reasonably powerful machine (16GB RAM minimum for decent models)

- Slower than cloud AI — latency is real on consumer hardware

- Model quality below GPT-4o and Claude 3.5 on complex reasoning tasks

- Setup is easy but not instant; first-time users need to understand model sizes/quantization

#5 — GPT4All: Offline AI That Actually Works for Normal People

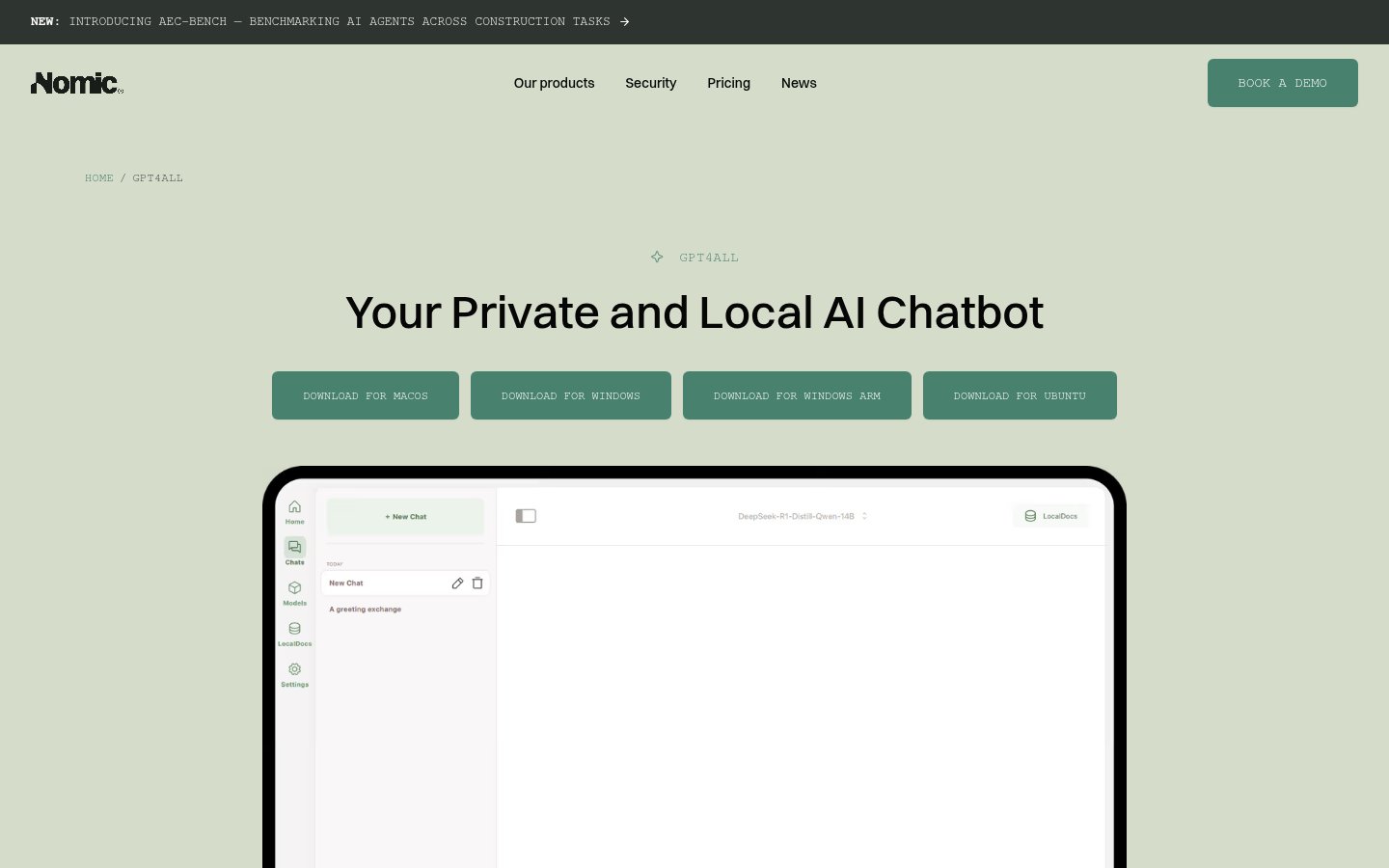

What it does: GPT4All, built by Nomic AI, is a free desktop application for running local language models. Like LM Studio, it lets you chat with AI offline — but it's designed for a less technical audience. The UI is simpler, the model selection is curated rather than exhaustive, and the "LocalDocs" feature lets you create collections of your own files that the AI can reference in conversation.

Best for: Privacy-conscious non-developers who want offline AI without technical complexity; small teams looking for a free, local AI solution.

Pricing: Free. Open source.

Why it's #5: GPT4All does what it promises without requiring any technical knowledge beyond clicking "download." For a knowledge management use case, the LocalDocs feature — where you point GPT4All at a folder of documents and it builds a local index — is the standout. You can chat with your entire Documents folder without anything leaving your machine.

It sits below LM Studio in this ranking because the model selection is narrower and the feature set is less comprehensive for power users. But for someone who wants offline AI and doesn't want to think about model quantization, it's the better starting point.

Pros:

- Truly beginner-friendly setup

- Strong privacy — fully offline

- LocalDocs feature is well-implemented

- Completely free and open source

Cons:

- Fewer model options than LM Studio

- Less powerful for developer use cases

- UI is simple to the point of occasionally frustrating

- Document indexing can be slow on large file collections

#6 — DeepSeek: Surprisingly Capable, Surprisingly Cheap

What it does: DeepSeek is a Chinese AI lab that released a series of open-weight models — most notably DeepSeek-V3 and the R1 reasoning model — that match or exceed GPT-4 class performance at a fraction of the API cost. The web interface is free; the API is priced at dramatically lower rates than OpenAI.

Best for: Developers building knowledge management tools on a budget; users who need strong reasoning and coding assistance.

Pricing: Free web access. API pricing is dramatically lower than competitors — roughly $0.27 per million input tokens for DeepSeek-V3 (vs. $2.50+ for GPT-4o). Specific pricing varies; check their site.

Why it's #6: DeepSeek-R1's reasoning capability is legitimately impressive for tasks like synthesizing research, breaking down complex problems, and generating structured knowledge from messy inputs. The cost advantage matters most at volume: if you're building something that makes many API calls, the price difference adds up fast.
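To see how quickly the gap compounds, here is the arithmetic using the input-token prices quoted above ($0.27 vs. $2.50 per million). The 50-million-token monthly workload is a hypothetical figure, and the sketch ignores output tokens and cache discounts, which both providers price separately.

```python
# Per-million-token input prices quoted above (USD); verify before relying on them.
PRICE_PER_M_INPUT = {"deepseek-v3": 0.27, "gpt-4o": 2.50}


def input_cost(tokens: int, model: str) -> float:
    """Cost in USD of sending `tokens` input tokens to `model`."""
    return tokens / 1_000_000 * PRICE_PER_M_INPUT[model]


monthly_tokens = 50_000_000  # hypothetical workload: 50M input tokens per month
for name in PRICE_PER_M_INPUT:
    print(f"{name}: ${input_cost(monthly_tokens, name):,.2f}")
```

At that volume, input costs come to about $13.50 a month on DeepSeek-V3 versus $125.00 on GPT-4o.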

The privacy concern is real, though, and shouldn't be minimized. DeepSeek is based in China and subject to Chinese data laws. For personal notes and sensitive information, think carefully about what you send through their API. That consideration is why it sits at #6 despite strong technical capability.

Pros:

- Exceptional reasoning capability (R1 model especially)

- Dramatically cheaper API than OpenAI/Anthropic

- Free web interface is genuinely useful

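- Open-weight models can be run locally via LM Studio (in practice, the smaller distilled R1 variants; the full models are far too large for consumer hardware)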

Cons:

- Chinese company — data privacy concerns are legitimate

- Web interface is basic compared to ChatGPT or Claude

- Less ecosystem integration than established players

- Context window and tool use still behind frontier models in some areas

#7 — Mistral: The European Alternative Worth Taking Seriously

What it does: Mistral AI, the French company, produces a family of efficient open-weight and proprietary models. Their larger commercial models (such as Mistral Large) compete with GPT-4 class systems, while their open models (Mistral 7B, Mixtral 8x7B) are among the most capable local models available. For knowledge management, the open models run in local tools like LM Studio, and the hosted API is EU-based and positioned around GDPR compliance.

Best for: European users with data compliance requirements; developers wanting a capable, self-hostable AI backbone; anyone who wants a credible alternative to American AI providers.

Pricing: Free tier via La Plateforme (their API platform). Paid API access from roughly €0.10–€4.00 per million tokens depending on model. Open-weight models are free to run locally.

Why it's #7: Mistral's value proposition in 2026 is clearer than ever: capable models, European data sovereignty, and genuine open-source commitment. For organizations where GDPR compliance isn't optional (healthcare, legal, finance), Mistral is often the practical answer that lets teams use capable AI while keeping legal exposure low.

As a pure knowledge management tool for individuals, it's more of an ingredient than a complete solution. You'd use Mistral's API to power something else (an Obsidian plugin, a custom RAG setup) rather than as a standalone product.

Pros:

- Strong GDPR compliance; EU-based data processing

- Open-weight models available for full local deployment

- Competitive performance, especially on European-language tasks

- Good API documentation and developer experience

Cons:

- No polished consumer-facing product (it's an API/model provider)

- Requires technical setup to actually use for knowledge management

- Smaller ecosystem than OpenAI

- La Plateforme interface is functional but not exciting

#8 — Grok: Real-Time Knowledge, With Caveats

What it does: Grok is xAI's conversational AI, built into X (formerly Twitter) and available as a standalone product. Its differentiator is real-time access to information from X — current events, trending discussions, public posts — combined with strong reasoning capabilities. Grok 3 (released in early 2025) is a significant step up from earlier versions.

Best for: Journalists, researchers, and analysts who need AI that has genuine knowledge of current events and can search live information.

Pricing: Requires X Premium+, which runs around $40/month (pricing has changed; verify current). Standalone Grok access pricing varies by region.

Why it's #8: Grok earns its spot for a specific use case: if your knowledge work requires being current, Grok's real-time X integration is genuinely useful. Ask it about a company's recent moves, a developing story, or what people in a particular field are saying right now — and you get answers grounded in actual recent data, not a training cutoff from 18 months ago.

For general knowledge management (organizing notes, synthesizing research, recalling your own information), it's not the right tool. The X dependency is a constraint, not a feature, for most workflows. And the $40/month price tag is high for a tool that covers only one slice of a knowledge workflow.

It sits last on this list, but "last" here means "most specialized." If you live in real-time research, it punches well above its ranking.

Pros:

- Real-time knowledge from X is genuinely differentiated

- Grok 3 is a capable reasoning model

- Fun, less cautious personality compared to ChatGPT/Claude

- Deep Search feature is useful for research tasks

Cons:

- Requires X Premium+ subscription — expensive

- Tied to the X ecosystem and platform dynamics

- Not a knowledge management tool; more of a knowledge access tool

- Privacy considerations around X data practices

How to Choose: A Practical Decision Tree

Not sure which tool belongs in your workflow? Here's how I'd break it down:

- You write a lot of notes and hate organizing them → Start with Mem.ai

- You want full control and privacy above all else → Obsidian + AI plugins

- You want to remember everything you've ever seen on your computer → Rewind AI

- You want to run AI locally and you're comfortable with tech → LM Studio

- You want offline AI with minimal setup → GPT4All

- You need powerful reasoning at low API cost → DeepSeek

- You need GDPR compliance for a European context → Mistral

- You need AI that knows what happened last week → Grok

These tools aren't mutually exclusive. My own setup uses Obsidian as the primary vault, LM Studio for local queries, and Mem.ai for fast capture on the go. They solve different problems.

The State of AI Knowledge Management in 2026

The biggest shift over the last 18 months isn't model capability; it's that the privacy vs. capability tradeoff has narrowed dramatically. In 2024, running AI locally meant accepting severely degraded performance. In 2026, tools like LM Studio running Llama 3 70B (on well-equipped hardware) or a distilled DeepSeek-R1 variant are genuinely good enough for most knowledge work tasks. You don't have to send your notes to the cloud to get AI that's useful.

That said, the cloud-based tools — Mem.ai especially — still offer a better product experience. The AI is better integrated, the UX is smoother, and the workflows are faster to get into. If you trust the provider, the cloud is still the path of least friction.

The question isn't really "which is the best AI tool for knowledge management?" The question is "what's your threat model and what's your workflow?" Answer those two things, and this list should make the choice fairly obvious.

FAQ

Is Mem.ai worth paying for in 2026?

If you write regularly and your work involves synthesizing lots of information (research, consulting, writing, strategy), yes. The automatic organization and AI-powered synthesis are genuinely better than anything you can build manually in Notion or Roam. The caveat is that your data lives on Mem's servers, so it's not appropriate for highly sensitive information.

Can I use these tools together, or do I have to pick one?

Absolutely use them together. Many serious knowledge workers use Obsidian as their local vault, Mem.ai for quick capture and AI synthesis, and something like LM Studio or GPT4All for offline AI queries. These tools solve different problems in the same general space.

Are local AI tools (LM Studio, GPT4All) actually private?

Yes, as long as you use local models and don't connect to any external API. Everything runs on your machine. The caveat is that some features in these apps can optionally use cloud services — make sure you're not accidentally enabling those if privacy is the priority.

Is DeepSeek safe to use for sensitive work?

For code, general research, and non-sensitive tasks — probably fine. For personal notes, client information, or anything under professional confidentiality obligations — I'd be cautious. DeepSeek is subject to Chinese law, which includes data access provisions that differ from EU/US standards. If you're using the open-weight models locally via LM Studio, the privacy concern disappears.

Why isn't ChatGPT or Claude on this list?

This list focuses on tools that are specifically valuable for personal knowledge management, especially those with local options, note-taking integration, or memory features. ChatGPT (OpenAI) and Claude (Anthropic) are excellent general AI assistants, but they're not knowledge management tools per se. Even with their newer memory and project features, they aren't built to index your notes, recall what you've done on your computer, or organize a personal knowledge base. They're general-purpose assistants; this list is about the products and interfaces built around the knowledge management use case.

What hardware do I need to run local AI tools like LM Studio?

Realistically: at minimum, 16GB of RAM and a reasonably modern CPU to run smaller models (7B parameters). For the better models (30B+), you want 32GB RAM and ideally a GPU — either a discrete Nvidia card or Apple Silicon with unified memory. An M2 MacBook Pro with 32GB RAM is currently one of the best value setups for local AI. Running larger models on CPU alone is possible but slow.
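A useful rule of thumb behind these numbers: a model's weights need roughly (parameters × bits per weight ÷ 8) bytes, plus headroom for the context (KV cache) and the runtime. The sketch below encodes that estimate; the 20% overhead factor is an assumption for illustration, and real usage grows with context length and varies by quantization format.

```python
def estimate_memory_gb(params_billion: float, bits_per_weight: float, overhead: float = 0.2) -> float:
    """Rough RAM/VRAM needed to load a model, in GB.

    Weights take params x bits / 8 bytes; `overhead` is an assumed fudge factor
    for the KV cache, runtime buffers and the OS (real usage varies with context length).
    """
    weights_gb = params_billion * bits_per_weight / 8
    return weights_gb * (1 + overhead)


for size in (7, 30, 70):
    print(f"{size}B @ 4-bit ~ {estimate_memory_gb(size, 4):.1f} GB")
```

For 4-bit quantization, that gives about 4.2 GB for a 7B model, 18 GB for 30B, and 42 GB for 70B, which is why 16GB handles small models, 32GB covers the 30B class, and 70B models need more. The same 7B model at 16-bit precision would need about 17 GB, which is why quantization matters on consumer hardware.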


infobro.ai Editorial Team

Our team of AI practitioners tests every tool hands-on before writing. We update our content every 6 months to reflect platform changes and new research.